How Do I Know It's Working? Advanced Page Cache Edition

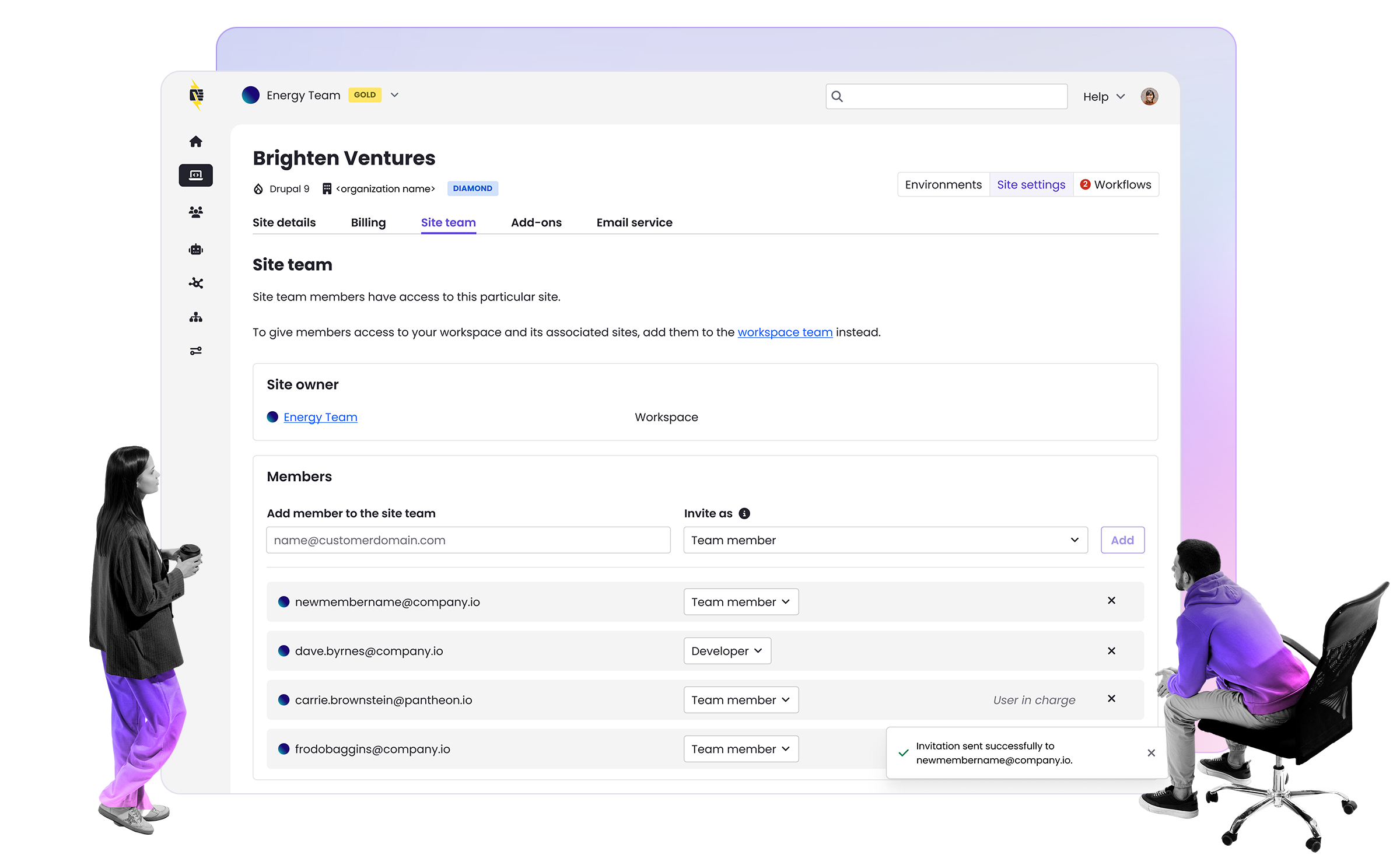

Much of my day-to-day work as an ACE at Pantheon is spent confirming that certain development workflow tasks are possible on the platform. Lately, my preferred way of showing that something is possible, and stays possible, is by writing an automated test. Last summer, when I wrote blog posts about Drupal 7 to Drupal 8 migrations, I also made a small GitHub repo with some CircleCI tests. That CircleCI script takes a fresh Drupal 8 site, configures migrations, runs them, and checks the result with Behat. It runs every night and helps me know that D7 > D8 migrations are still possible on Pantheon.

Advanced Page Cache

With the release of the Pantheon Advanced Page Cache module, which I wrote about last month, I wanted automated tests to demonstrate fine-grained control of the Surrogate Keys (Cache Metadata) sent by a given site. To do this, I used the demo module that comes with Views Custom Cache Tags. I think this module will be used by almost every site that wants granular control of its Surrogate Keys. The demo module adds a View that varies cache tags by node type. The basic idea is that saving a page node should clear a listing of page nodes while leaving listings of other node types in the cache.

It also implements hook_node_presave() (the node version of hook_ENTITY_TYPE_presave()) to invalidate that tag every time a node is saved.

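    use Drupal\Core\Cache\Cache;
    use Drupal\node\NodeInterface;

    /**
     * Implements hook_node_presave().
     */
    function views_custom_cache_tag_demo_node_presave(NodeInterface $node) {
      // Build the tag for this node's type, for example "node:type:page".
      $cache_tag = 'node:type:' . $node->getType();
      // Invalidate everything carrying that tag, including the matching View listing.
      Cache::invalidateTags(array($cache_tag));
    }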

Manually Checking Cache Tags

To manually see what is going on, I like to use the curl command line utility with the -I flag to inspect the HTTP headers for different URLs:

    curl -IH "Pantheon-Debug:1" http://83-d8papc.pantheonsite.io/custom-cache-tags/page | egrep 'Surrogate-Key-Raw|Age'
    Surrogate-Key-Raw: block_view config:block.block.bartik_account_menu config:block.block.bartik_branding config:block.block.bartik_breadcrumbs config:block.block.bartik_content config:block.block.bartik_footer config:block.block.bartik_help config:block.block.bartik_local_actions config:block.block.bartik_local_tasks config:block.block.bartik_main_menu config:block.block.bartik_messages config:block.block.bartik_page_title config:block.block.bartik_powered config:block.block.bartik_search config:block.block.bartik_tools config:block_emit_list config:color.theme.bartik config:search.settings config:system.menu.account config:system.menu.footer config:system.menu.main config:system.menu.tools config:system.site config:user.role.anonymous config:views.view.view_node_type_ab http_response node:2 node:type:page node_view rendered user:1
    Age: 7

As you can see, Drupal 8 adds a ton of Surrogate Keys. The one added by our Views configuration from the previous section is node:type:page, near the end of the list. You can see it follows the pattern we defined.
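If you would rather not scan that long header by eye, a small shell helper can look for a single key. This is only a sketch: has_surrogate_key is a name made up for this example, not something the module ships, and it assumes the response headers arrive on stdin (for instance, piped from curl -I).

```shell
# Hypothetical helper (not part of any module): succeeds when the response
# headers on stdin list the key given as $1 in the Surrogate-Key-Raw header.
has_surrogate_key() {
  # Drop carriage returns (HTTP header lines end in CRLF), keep only the
  # Surrogate-Key-Raw line, strip the header name, put each key on its own
  # line, then require an exact whole-line match so that "node:2" cannot
  # match "node:20".
  tr -d '\r' \
    | grep -i '^Surrogate-Key-Raw:' \
    | sed 's/^[^:]*:[[:space:]]*//' \
    | tr -s ' ' '\n' \
    | grep -qxF -- "$1"
}

# Usage (needs network access, so it is left as a comment):
#   curl -sIH "Pantheon-Debug:1" http://83-d8papc.pantheonsite.io/custom-cache-tags/page \
#     | has_surrogate_key node:type:page && echo "key present"
```

The exact-match step matters because many keys share a prefix; a plain substring search for node:type:page would also accept a longer key that merely starts with it.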

The other header to look at is "Age." This is added by the Pantheon Global CDN and shows the number of seconds that this page has been cached. A nonzero result means a cache hit.
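The "nonzero Age means a cache hit" rule is simple enough to script. Here is a minimal sketch, assuming the headers come in on stdin; age_from_headers and is_cache_hit are names invented for this example, and treating a missing Age header as 0 is my assumption rather than documented CDN behavior.

```shell
# Hypothetical helper: print the Age header value found in the response
# headers on stdin, or 0 when there is no Age header (an assumption).
age_from_headers() {
  tr -d '\r' | awk -F': *' '
    tolower($1) == "age" { print $2; found = 1; exit }
    END { if (!found) print 0 }
  '
}

# Hypothetical helper: succeeds when the headers on stdin show a nonzero Age,
# which the text above reads as a cache hit.
is_cache_hit() {
  [ "$(age_from_headers)" -gt 0 ]
}

# Usage (needs network access, so it is left as a comment):
#   curl -sIH "Pantheon-Debug:1" http://83-d8papc.pantheonsite.io/custom-cache-tags/page \
#     | is_cache_hit && echo "cache hit"
```

Keep in mind that an Age of 0 only says the response was not served from cache this time; a freshly stored page can also report 0 on its first request.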

If I create a new page node (or resave an existing one) and try curling again, the Age resets to 0 because Drupal knew to clear the View that listed all page-type nodes:

    curl -IH "Pantheon-Debug:1" http://83-d8papc.pantheonsite.io/custom-cache-tags/page | egrep 'Age'
    Age: 0

However, the cached list of article nodes is unaffected:

    curl -IH "Pantheon-Debug:1" http://83-d8papc.pantheonsite.io/custom-cache-tags/article | egrep 'Age'
    Age: 30

The system works!
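The judgment I'm making by eye in those last two checks can be written down as a tiny shell function. Treat it as a sketch: classify_age_change is a hypothetical name, and it assumes you recorded each page's Age once before saving the node and once after, and that the page was already cached (nonzero Age) before the save.

```shell
# Hypothetical helper: compare two Age readings for the same URL.
#   $1 = Age in seconds before the node was saved
#   $2 = Age in seconds after the node was saved
# Assumption: a purged page starts over from a low Age, while an untouched
# page simply keeps counting up.
classify_age_change() {
  if [ "$2" -lt "$1" ]; then
    echo purged
  else
    echo still-cached
  fi
}

# The page listing above went from Age 7 to Age 0:
#   classify_age_change 7 0     # prints "purged"
# An illustrative pair of readings for a listing that was left alone:
#   classify_age_change 12 30   # prints "still-cached"
```

This heuristic cannot tell a purge from natural expiry, so it is only meaningful when the two readings are taken close together around the save.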

A Behat Scenario

As much fun as it is to curl for HTTP headers, I don't want to be doing that manually to test over the long term. I'd rather write a more readable version in Behat.

    Scenario: Node type-based clearing
    Scenario: Node type-based expiration
      Given there are some "page" nodes
      And "custom-cache-tags/page" is caching
      And there are some "article" nodes
      And "custom-cache-tags/article" is caching
      When a generate a "page" node
      Then "custom-cache-tags/page" has been purged
      And "custom-cache-tags/page" is caching
      And "custom-cache-tags/article" has not been purged

With this Behat scenario I confirm that adding a new page node causes the listing of all page nodes to be purged from cache while the cached listing of articles remains intact. The goal with this level of granularity is to ensure that everything that can stay cached does stay cached, and everything that needs to be cleared does get cleared.

Custom Behat Step Definitions

When I first saw Behat steps I thought they seemed too good to be true. How does a simple sentence fragment like 'And "custom-cache-tags/page" is caching' actually get evaluated? For this module I wrote a few custom step definitions like this one:

    /**
     * @Given :page is caching
     */
    public function pageIsCaching($page)
    {
        $age = $this->getAge($page);
        // A zero age doesn't necessarily mean the page is not caching.
        // A second request may show a higher age.
        if (!empty($age)) {
            return true;
        } else {
            sleep(2);
            $age = $this->getAge($page);
            if (empty($age)) {
                throw new \Exception('not cached');
            } else {
                return true;
            }
        }
    }

That method does basically the same thing as the manual curls shown earlier. It requests the URL and looks at the "Age" HTTP header. If the age is empty or zero, it waits two seconds and requests the URL once more, and it throws an exception if that second response still shows no age.

I followed a similar process for the “has been purged” step definition. You can see the full source for the custom Behat test contexts here.

What about real sites with lots of content types and Views?

It's easy enough to write a handful of Behat steps to verify the behavior of one View and two content types. But what if I had ten content types, or dozens, as many real sites do?

So here's my question for you, yes, you! How do you want to verify that all of your caches are doing what you expect? Is it good enough to do some manual checking, or do you want an automated test that can guide implementation during development and protect against regression on an ongoing basis? If you did want automated tests for the caching behavior of a site with numerous entity types and builds and numerous variations of pages, what would your ideal test look like?

I have some ideas for making Behat's structure scale better as well as some thoughts about other testing frameworks. But first I'd love to hear from you here in the comments or in the Pantheon Community and Power Users groups.
