What WordPress & Drupal Developers Need to Know about SEO

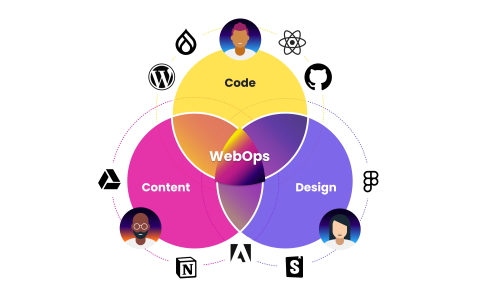

Why should web developers care about SEO? It might seem like the very essence of “not my job.” Developers build the site. Content creators fill the site with content. The quality of that content, plus the content creators’ efforts to amplify it, is where SEO lives. Right?

The fact is, though, web developers can do a great deal to increase a site’s search engine visibility. If we view SEO as someone else’s job, we’re holding back both our content creators and the website’s performance.

There are three stages of SEO:

Content needs to be found

Content needs to be indexed

Content needs to be high-quality

The first two are squarely the development team’s responsibility. To help Drupal and WordPress developers meet that responsibility, I recently had the privilege of hosting a webinar with Yoast Co-Founder & CEO Joost de Valk. From that talk, here are the five key ways that web developers should be contributing to SEO.

Information Architecture and Site Maps

Your site’s architecture will greatly influence how search engines index the site. Before you start building a new site, it’s important to consider:

What types of content will be on the site

How content will be organized

How visitors will travel through the site

Joost advises that every page on the site should have a “click path”: a way for people to reach that page through navigation, such as a navigation menu or internal crosslinks. It’s tempting to rely on an internal search function, but bots won’t use it, so every page needs to be reachable by following links through the content.

When you inherit a site without that clear structure, creating the structure should be a priority. Make your site more navigable for humans, and it will be more navigable for search engines and other modes of access.
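When auditing an inherited site, one way to think about click paths is as a graph problem: start at the homepage, follow every internal link the way a crawler would, and flag any page you never reach. The minimal sketch below assumes you already have the link graph (for example from a crawl or from your CMS’s menus); the page paths and the `find_orphans` helper are hypothetical.

```python
from collections import deque


def find_orphans(links: dict[str, list[str]], start: str = "/") -> set[str]:
    """Return pages that cannot be reached by following links from `start`.

    `links` is a hypothetical, pre-built map of each page path to the
    internal paths it links to.
    """
    reachable = {start}
    queue = deque([start])
    # Breadth-first search: follow every internal link, as a crawler would.
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in reachable:
                reachable.add(target)
                queue.append(target)
    # Any known page the search never visited has no click path.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - reachable


site = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/seo-basics"],
    "/blog/seo-basics": ["/blog"],
    "/about": [],
    # Reachable only through internal search, never through a link:
    "/blog/robots-txt-guide": ["/"],
}

print(find_orphans(site))  # {'/blog/robots-txt-guide'}
```

Note that a page linking *out* to the homepage doesn’t help it; what matters is whether some reachable page links *to* it.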

You should also create an XML sitemap: a machine-readable index of the URLs you want search engines to find. Submitting it through Google Search Console lets you see how your site is being indexed and how it is performing.
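A sitemap is just a list of `<url>` entries in the sitemaps.org XML format. In practice a WordPress plugin or Drupal module usually generates it for you, but a minimal sketch using Python’s standard library shows what the file contains. The `build_sitemap` helper, URLs, and dates are placeholders, not part of any CMS.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (absolute URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2018-05-01
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://www.example.com/", "2018-05-01"),
    ("https://www.example.com/blog/seo-basics", "2018-05-03"),
]))
```

The file is conventionally served at something like `/sitemap.xml`, and that is the URL you would submit in Search Console.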

Robots.txt

The robots.txt file is one of the most powerful, yet least understood, ways for developers to interact with search engines. It’s a small text file at the root of your domain, and it’s the first thing well-behaved bots check when they come to your site. Its contents tell bots which pages or directories they are allowed to crawl.
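To see how a crawler reads those rules, Python’s standard library ships a robots.txt parser. The sketch below feeds it a sample file with hypothetical paths and asks whether Googlebot may fetch two URLs. Treat it as an approximation: this parser follows the original robots.txt conventions and may not match Google’s own matching in every edge case.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt (hypothetical paths), as served at https://www.example.com/robots.txt
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /search/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ("https://www.example.com/blog/seo-basics",
            "https://www.example.com/search/?q=seo"):
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "disallowed"
    print(url, "->", verdict)
```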

Note that disallowing a page will not prevent it from showing up in search results; it only keeps bots from crawling the page. If enough third-party sources link to a disallowed page, it can still appear in search results. To remove a page from search completely, use the noindex robots meta tag instead, and leave the page crawlable: a bot that is blocked from fetching the page never sees the tag.
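The tag itself is a single line in the page’s `<head>`: `<meta name="robots" content="noindex">`. To audit which pages actually carry it, here is a minimal sketch built on Python’s standard-library HTML parser. The `RobotsMetaFinder` class and `is_noindexed` helper are hypothetical names, and the sketch only inspects the meta tag, not the `X-Robots-Tag` HTTP header that can carry the same directive.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names.
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_noindexed(html: str) -> bool:
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page))  # True
```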

It’s best to use robots.txt sparingly. Some developers put virtually everything in there, but it should ideally be used only for sections of your site that aren’t your primary focus or your best content.

Joost and his team at Yoast have a great guide to robots.txt that every developer should read. It explains when, and when not, to add a page to the file.

JavaScript & SEO

It used to be that JavaScript-generated content was virtually opaque to bots: they would crawl the HTML and text of a site, but didn’t render the entire page. Now, however, Google does render your page in its entirety, so content produced by JavaScript can contribute to SEO.

However, Google stops rendering your page after roughly 5 seconds, so make sure your most valuable content renders within that window. (You should make sure your site renders in under 5 seconds for lots of reasons, mind you, but the reality is that some JavaScript-heavy sites don’t.) When in doubt, use Google Search Console to fetch and render a page so you can see what the bot sees. Then check your analytics to make sure the sub-pages you link to through JavaScript are being indexed.
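One rough way to spot links that depend on JavaScript is to look at the HTML your server sends before any script runs. The sketch below (hypothetical helper names, standard library only) collects the `<a href>` links present in that raw HTML; a link that shows up in the rendered page but not in this list is one only a rendering crawler will discover, and only if rendering finishes in time.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect href values from <a> tags in raw, unrendered HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_initial_html(html: str) -> list[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


raw_html = """
<nav><a href="/blog">Blog</a></nav>
<div id="related"></div>
<script>
  // This link only exists after the script runs in a browser.
  document.getElementById("related").innerHTML = '<a href="/blog/seo-basics">SEO basics</a>';
</script>
"""

print(links_in_initial_html(raw_html))  # ['/blog']
```

The parser treats the contents of `<script>` as plain text, which is exactly why the JavaScript-injected link is missing from the result.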

HTML Elements

Some HTML tags are exclusively for your content creators to worry about: titles, meta descriptions, h2 tags, and so on. The next three, however, belong on the development side.

Structured Data is rising in importance for Google and other tools. This markup helps Google identify elements of a page, such as the page’s author and what type of content it is. That information can influence ranking and help your page get featured in a snippet. Envato has a nice Introduction to Structured Data Markup to help you get started.
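A common way to add structured data is a JSON-LD `<script>` block using the schema.org vocabulary. The sketch below generates one for an article; the `article_json_ld` helper, author name, and date are hypothetical, and which properties Google actually uses for a given content type is worth checking against its own structured data documentation.

```python
import json


def article_json_ld(headline: str, author: str, published: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,  # ISO 8601 date
    }
    # Escape "</" so a value like "</script>" cannot close the tag early;
    # "\/" is still valid JSON for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


print(article_json_ld(
    "What WordPress & Drupal Developers Need to Know about SEO",
    "Jane Developer",  # hypothetical author
    "2018-05-01",      # hypothetical publication date
))
```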

The Canonical Tag is a way for you to prioritize a specific URL for content that might be duplicated. It tells Google, “This is the version of this URL that I want in the index.” For instance, if you post a link to Facebook and Twitter with different UTM tags, the canonical tag would help prioritize the cleanest version of the URL. Check Yoast’s canonical guide for why and how to use the tag.
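For the UTM example, the canonical URL is simply the page URL with the tracking parameters removed, emitted as `<link rel="canonical" href="...">` in the page’s `<head>`. Here is a minimal sketch; it assumes every `utm_*` parameter is tracking-only and that other query parameters matter, and the helper names are hypothetical. Your CMS or SEO plugin may already output this tag, so check before adding a second one.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Drop utm_* tracking parameters and the fragment; keep other query params."""
    parts = urlsplit(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if not key.lower().startswith("utm_")]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


print(canonical_link_tag(
    "https://www.example.com/blog/seo-basics?utm_source=twitter&utm_medium=social"
))
# <link rel="canonical" href="https://www.example.com/blog/seo-basics">
```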

Hreflang is an attribute that tells Google which language (and, optionally, which region) a page is written for, and where its versions in other languages live. It’s a simple idea with a fairly convoluted execution, but if your site has multiple languages, it’s worth checking out Google’s hreflang guide.
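Part of what makes hreflang fiddly is that every language version should list the full set of alternates, including itself, plus an optional `x-default` fallback. Generating the tags from one shared mapping keeps them consistent. This is a sketch with hypothetical URLs and a hypothetical `hreflang_tags` helper.

```python
from html import escape


def hreflang_tags(alternates: dict[str, str], default_url: str) -> list[str]:
    """Build <link rel="alternate" hreflang="..."> tags for every language version.

    `alternates` maps a language (or language-region) code to that version's URL.
    The same full list should be output on every version of the page.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in sorted(alternates.items())
    ]
    # x-default marks the fallback page for users whose language isn't listed.
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">')
    return tags


for tag in hreflang_tags(
    {
        "en": "https://www.example.com/en/seo/",
        "de": "https://www.example.com/de/seo/",
        "es-MX": "https://www.example.com/es-mx/seo/",
    },
    default_url="https://www.example.com/en/seo/",
):
    print(tag)
```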

Performance & Security

Your site’s speed and responsiveness have always been a crucial part of the end-user experience, and search engines know this too. Google currently treats speed and uptime as ranking factors. It makes sense: Google’s goal is to recommend pages that will meet consumers’ needs, and consumers have very little patience for a slow-loading site.

Security is another element that is gaining importance as a ranking factor. Google announced back in 2014 that HTTPS would be a ranking signal in search, and it has steadily prioritized sites with HTTPS over insecure sites. It’s not optional anymore: for many reasons, including SEO, HTTPS should be standard.
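Making HTTPS standard usually also means sending plain-HTTP visitors, and bots, to the HTTPS version with a permanent (301) redirect. Most hosts and web servers handle this in configuration, but the logic is small enough to sketch as Python WSGI middleware. This is illustrative only: `https_redirect` and `hello` are hypothetical, and if TLS is terminated at a CDN or load balancer, the app may always see `http` and you would need to check a forwarded-protocol header instead to avoid a redirect loop.

```python
from urllib.parse import quote


def https_redirect(app):
    """WSGI middleware: permanently redirect plain-HTTP requests to HTTPS."""

    def middleware(environ, start_response):
        if environ.get("wsgi.url_scheme") == "https":
            return app(environ, start_response)
        host = environ.get("HTTP_HOST") or environ["SERVER_NAME"]
        path = quote(environ.get("SCRIPT_NAME", "") + environ.get("PATH_INFO", "")) or "/"
        location = "https://" + host + path
        if environ.get("QUERY_STRING"):
            location += "?" + environ["QUERY_STRING"]
        # 301 tells browsers and crawlers the move is permanent.
        start_response("301 Moved Permanently",
                       [("Location", location), ("Content-Length", "0")])
        return [b""]

    return middleware


def hello(environ, start_response):
    """A stand-in application for the example."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


app = https_redirect(hello)
```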

The easiest way to handle these issues is to choose a host with a sterling reputation for performance and uptime. Pantheon not only delivers that responsiveness; we also include HTTPS with our Global CDN for every account on our platform.

SEO: It’s Everyone’s Job

As web developers, we want the sites we design to be seen. Search engine visibility is a major component of bringing in that audience. SEO is not just for the content creators. The way we design and implement a site drastically affects the site’s rankings, for better or for worse. Let’s make sure it’s for the better.