AI Era Mythbusters: Bad Bot Edition

It’s hard to trust third-hand reporting in the middle of the AI hype cycle. Paid influencer activity and paper-thin marketing are everywhere. I have started discounting new claims of model or agent capabilities unless they come from someone I personally trust, or better yet, can verify independently.

Sadly, the same is true for web traffic. This post is the first in a series about navigating the hype cycle and finding true signals in the noise.

Bot Traffic FUD

I’ve seen some people throwing around stats saying over half of all traffic is inorganic, and 99% of it comes from “bad bots.” They’re painting a picture of a web where every site is under siege. That’s Fear.

Conversely, I see people saying that we can’t identify with confidence whether something is a bot, suggesting it’s impossible to understand what’s driving changes in web traffic trends. That’s Uncertainty.

The most extreme take of all is, of course, “The Dead Internet Theory,” the dystopian feeling that nothing online is real, that the slop-ocalypse has come; it’s just bots posting for bots, and human engagement exists only on a spectrum of bot-like algorithm-pleasing to outright grifting. That’s Doubt.

While I empathize with people in distress about all these things, FUD only fuels that fire. Digital marketing and web development are both going through existential crises, and the last thing we need is more angst.

So I’m pushing back. Pantheon serves over a billion unique visitors monthly, giving us a view into a statistically significant portion of all web traffic. I’ve got the raw data, direct from the clickstream. It’s time to bust some myths.

Today’s Myth: You Can’t Identify Bots

Proving beyond a reasonable doubt whether a web request resulted from human intent is hard. Validation isn’t built into the protocol, and there are plenty of ways to “trick” people into creating traffic. Click farms and ad fraud are long-standing and still growing concerns. But many people have taken the relative looseness of how the open web works to the extreme of saying that nothing can be trusted. In practice, this is false.

When any piece of software requests a web page, it identifies itself with a “user-agent header,” which is just a bit of text usually containing the browser version, operating system, etc. Bots and crawlers use this field to tell you who they are and why they’re there.

It’s just text. A developer can make it say anything. As such, there’s been some scare-mongering about nefarious actors impersonating “good bots” to cover for bad actions, but diving into the data, I see no evidence of significant user-agent fraud along those lines.
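To make the “it’s just text” point concrete, here is a minimal sketch of how a self-identified bot can be spotted from the user-agent string alone. The token list and the function name `is_self_identified_bot` are my own illustrative assumptions, not the matching rules used in the analysis; real bot catalogs are much longer and change often.

```python
import re

# Illustrative tokens only (an assumption); real bot catalogs are far longer.
BOT_PATTERN = re.compile(r"bot|crawler|spider|slurp", re.IGNORECASE)


def is_self_identified_bot(user_agent: str) -> bool:
    """Return True when the user-agent text announces itself as a bot."""
    return bool(BOT_PATTERN.search(user_agent))


# A typical desktop browser string and Googlebot's published crawler string.
browser_ua = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)
crawler_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

print(is_self_identified_bot(browser_ua))
print(is_self_identified_bot(crawler_ua))
```

Because the check only reads text the client chose to send, it catches bots that announce themselves and nothing else. That is exactly why request patterns matter as a second signal.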

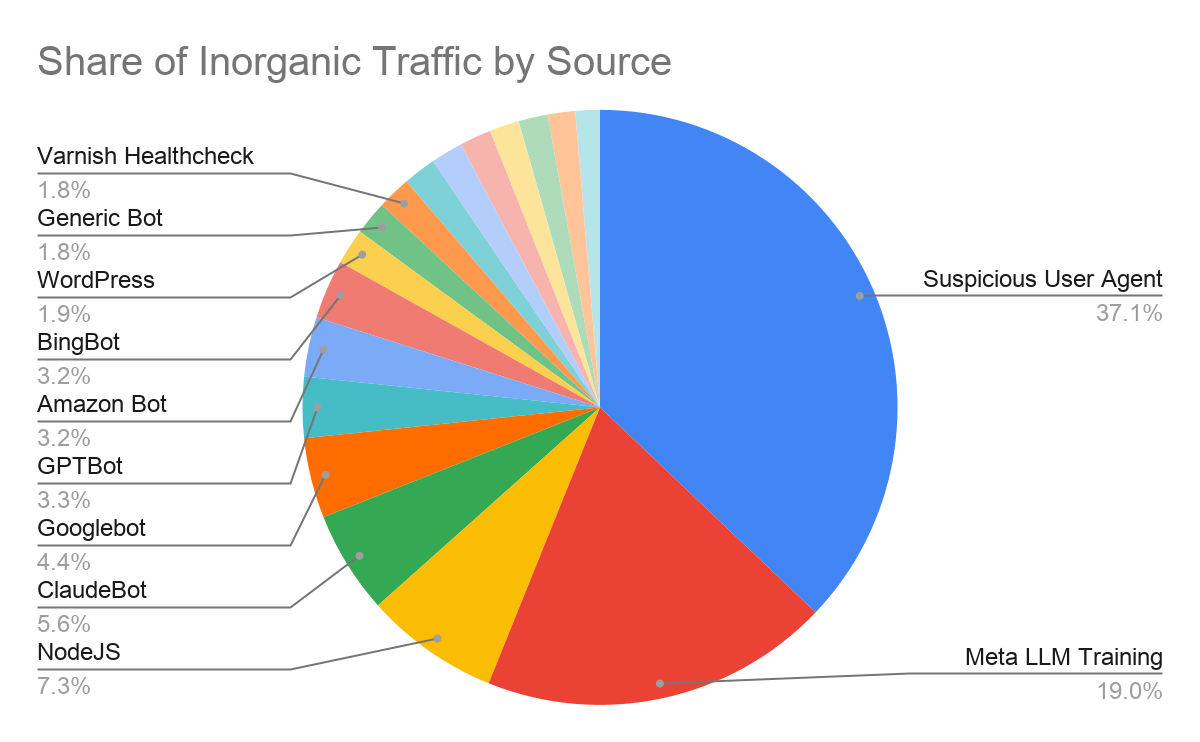

This analysis examined the unfiltered clickstream across the Pantheon platform, tallying successful requests for web pages that came with either a self-identified bot user agent or an unusually high ratio of requests per source, which suggests the source may be spidering (or attempting to attack) sites on the platform.
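For readers who want to try a similar tally on their own logs, here is a rough sketch of the two-signal approach described above. Everything specific in it is an assumption for illustration: the `(source_ip, user_agent)` record shape, the bot tokens, the bucket names, and especially the `min_requests_per_source` cutoff, which is not the threshold used in this analysis.

```python
from collections import Counter

# Illustrative tokens only (an assumption); a real bot catalog is much longer.
BOT_TOKENS = ("bot", "crawler", "spider")


def classify_requests(requests, min_requests_per_source=1000):
    """Tally successful page requests into three buckets.

    requests: list of (source_ip, user_agent) tuples.
    min_requests_per_source: hypothetical cutoff for "unusually high".
    """
    # Count how many requests each source address made in the sample.
    per_source = Counter(ip for ip, _ in requests)
    buckets = Counter()
    for ip, user_agent in requests:
        if any(token in user_agent.lower() for token in BOT_TOKENS):
            buckets["self_identified_bot"] += 1
        elif per_source[ip] >= min_requests_per_source:
            # Non-bot user agent, but the source is unusually busy.
            buckets["suspicious"] += 1
        else:
            buckets["other"] += 1
    return buckets
```

Calling `classify_requests(rows)` on a list of log rows returns a `Counter` with one total per bucket. A per-address cutoff is the simplest version of a “requests per source” ratio; it will also flag busy shared addresses, so treat the middle bucket as a starting point for a closer look rather than a verdict.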

Here’s the breakdown of (potentially) inorganic traffic from the analysis:

To get at this “stealth bot” problem, I compared the total requests from known bot user agents with those that had a non-bot user agent but a high rate of page requests. This is the “suspicious” bucket.

The suspicious bucket represents just over a third of all traffic in the sample. Don’t get me wrong, that’s a lot, but it’s also a far cry from the 99% FUD metric. It’s also not 37% of all requests, since the sample only covers potentially inorganic traffic patterns. And when you look deeper into this bucket, it turns out to be a bit of a mixed bag.

For instance, one source self-identified as a very outdated browser that requested 140M pages from just 44 network addresses in a few days. That’s… probably not human.
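A quick back-of-the-envelope calculation shows why. The request and address counts come from the example above; the three-day window is purely my assumption, since all I said was “a few days.”

```python
SECONDS_PER_DAY = 86_400


def requests_per_address(total_requests, addresses):
    """Average number of page requests made by each network address."""
    return total_requests / addresses


def requests_per_second(total_requests, addresses, days):
    """Average sustained request rate per address over the whole window."""
    return requests_per_address(total_requests, addresses) / (days * SECONDS_PER_DAY)


# 140M pages across 44 addresses: about 3.18 million pages per address.
per_address = requests_per_address(140_000_000, 44)

# days=3 is an assumed value for illustration, not a figure from the data.
rate = requests_per_second(140_000_000, 44, days=3)

print(f"{per_address:,.0f} pages per address")
print(f"{rate:.1f} pages per second per address")
```

Under that assumed window, each address would be pulling roughly 12 pages every second, around the clock. Stretch the window to a full week and the rate is still several pages per second per address.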

Others are harder to pin down and could be the result of desired or authentic activity. For instance, corporate networks and VPNs collapse the apparent source of traffic to just a few IPs, and IT-managed software can result in a huge number of employees all running the exact same (though more current) version of Chrome.

Finally, it’s worth noting that decoupled frontends running on Node.js collectively accounted for more requests than any crawler other than Meta (who have really been on a tear lately).

The headline takeaway is that most bot traffic appears legitimate. While Google used to be the big dog, they’re now one of a pack of players that are aggressively indexing the web, a trend that’s only likely to intensify. But most site owners want this traffic — it’s key to discovery, and to getting their information out to the wider internet.

Metrics for Machines and Humans

Website consumption is going up, but traffic patterns are changing. There are at least five major crawlers active now around the clock, when there used to be one. It’s also easier than ever for someone to vibe-code their own crawler and start messing around, which is clearly also a thing. There may also be crawlers operating in stealth.

That said, the data doesn’t support the claim that web traffic is any more fake now than it used to be. Authentic human use of the web isn’t in decline, and if you think in terms of visits instead of raw page consumption, the really interesting thing to watch is agentic requests made as part of a workflow or chat session.

The all-consuming crawls for training data dominate the numbers, but a live search from Claude is a machine making a request as part of a human process. This is new and interesting. Exciting, even.

What’s challenging, however, is that these changes in consumption are not under the control of site owners, and sometimes they have negative side effects. Heavy volumetric crawling puts a load on sites, sometimes even creating issues with stability. There can be commercial impacts as well.

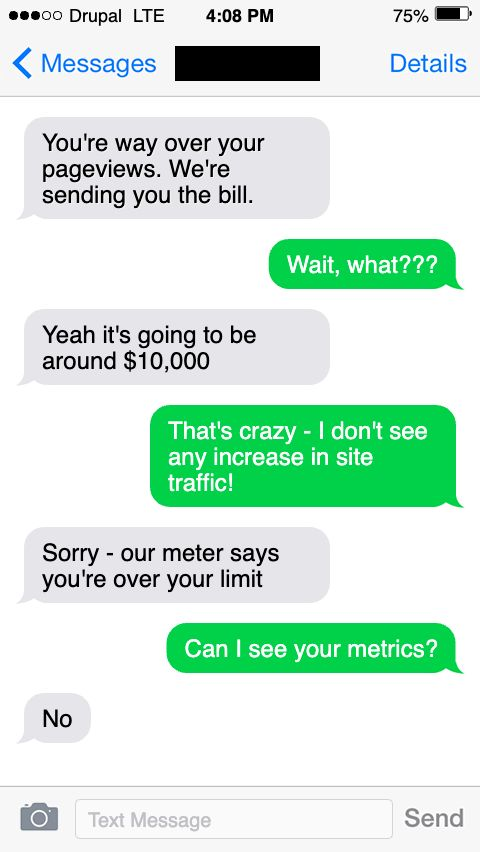

I know from talking to peers and partners that crawler traffic is triggering uncomfortable conversations around utilization and overages, especially with vendors that price and sell on pure consumption. It doesn’t feel great getting charged more for increased usage when there’s not much tangible value associated.

It feels even worse to find out that your vendor charges you for your own security monitoring or uptime measurement. I’ve spoken to folks out there who are stuck with no-fun debates between procurement and compliance departments, because they finally figured out a security scanner was causing overage charges and started blocking it. Those aren’t trade-offs you want to be making.

If you’re having interactions that feel like this:

We can help. Our approach to billing pre-excludes identified bot traffic, including AI labs, uptime monitors, and security or accessibility scanners. We only count successful requests for web pages, and we price around unique visits rather than raw pages served, which better aligns price and value.

Most of all, we’re committed to working with customers well ahead of contract renewals when there are issues with sizing. Our goal is to never send a surprise bill from Pantheon. Let us know if you’re interested in hearing how we do that.

For everyone else, stay tuned for more mythbusting. Now that the sample data is primed, more deep-diving is in the cards! Up next: what can we tell about “stealth crawlers,” and what explains the growing gap between first-party platform metrics and tag-based analytics like GA4? See you next time!